How AI Is Reshaping Own Damage Claims in Motor Insurance

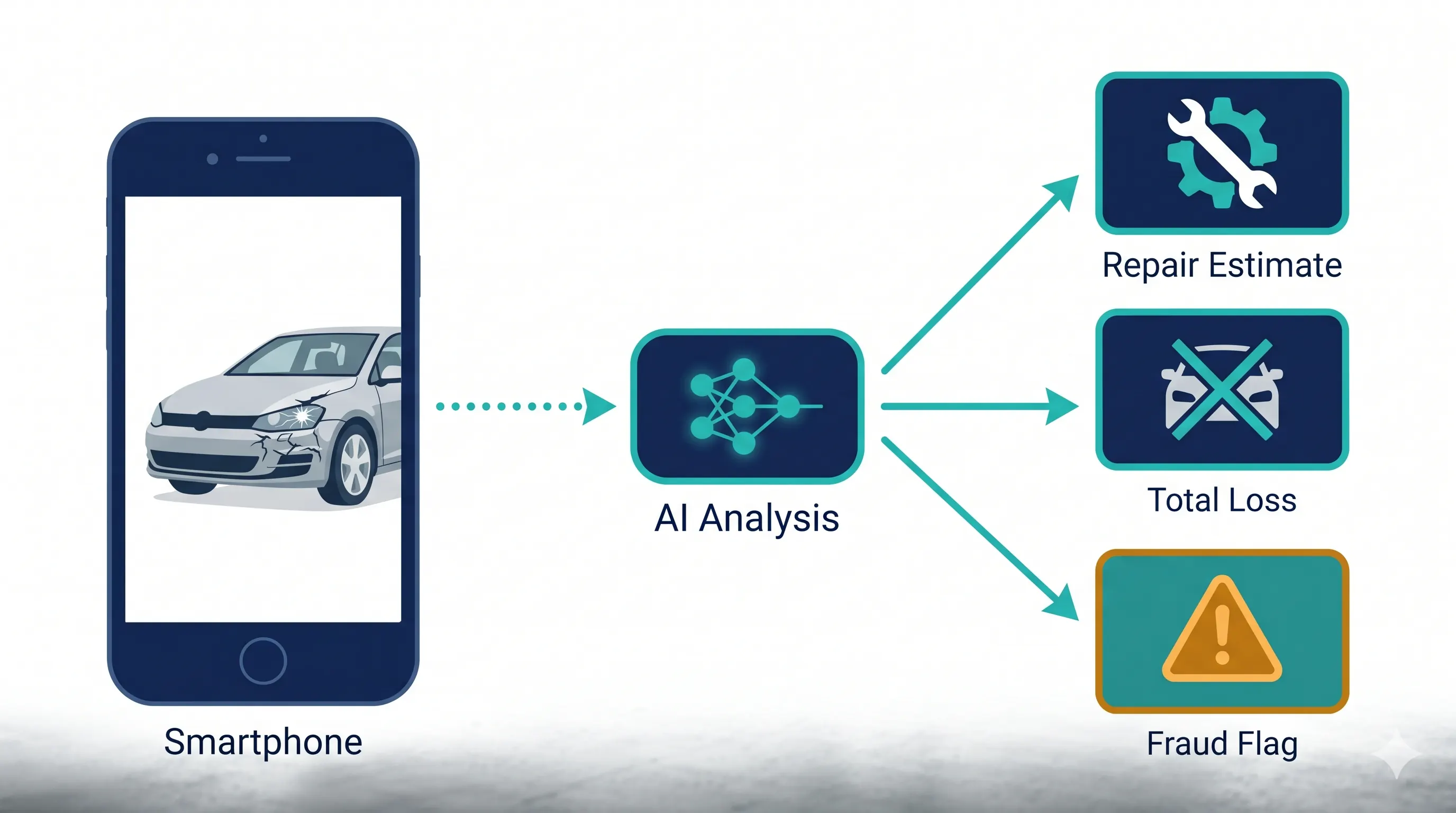

Own damage is where AI in motor claims has gone from pilot to production. From photo-based first notice of loss (FNOL) to computer vision damage assessment and image fraud detection, here's how carriers are turning weeks-long workflows into same-day settlements in 2026.

If you want to see what AI in motor insurance actually looks like in production, look at own damage (OD). It is the highest-volume, most repetitive, most image-driven segment of motor claims — and that combination has made it the single most fertile ground for automation. The carriers that have invested seriously in OD are settling routine claims in hours instead of weeks, and shifting their adjusters' attention to the cases where human judgment genuinely matters.

The OD Claim Has Become a Photo Pipeline

A typical OD workflow used to mean a phone call to report the loss, a scheduled inspection at a body shop or a drive-in center, a paper estimate, and a wait. The modern equivalent looks very different:

- The customer reports the claim through an app, web form, or conversational agent.

- The app or agent then guides them through capturing a set of photos and a short video of the vehicle.

- Computer vision models classify damaged parts, score severity, and flag whether the damage pattern is consistent with the described incident.

- A pricing engine combines parts catalogs, regional labor rates, and historical claim data into an estimate.

- The system either issues a straight-through settlement, books a repair appointment with a network shop, or escalates to a human adjuster.
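The pricing step can be sketched as a sum over part-level line items: replaced parts are priced from a catalog, and all labor is priced at the regional rate. This is a minimal illustration under stated assumptions, not any carrier's engine. The part codes, prices, and rates are hypothetical, and a production engine would also draw on historical claim data and cover paint, materials, and taxes.

```python
from dataclasses import dataclass

# Hypothetical parts catalog: part code -> replacement part price (local currency).
PARTS_CATALOG = {
    "rear_bumper_cover": 420.00,
    "quarter_panel_left": 650.00,
    "tail_lamp_left": 185.00,
}

# Hypothetical regional labor rates, per hour.
LABOR_RATES = {"region_a": 60.00, "region_b": 75.00}


@dataclass
class LineItem:
    part: str            # panel-level part code from the vision model
    action: str          # "repair" or "replace"
    labor_hours: float   # labor time for this operation


def estimate(items: list[LineItem], region: str) -> float:
    """Price replaced parts from the catalog and all labor at the regional rate."""
    rate = LABOR_RATES[region]
    total = 0.0
    for item in items:
        if item.action not in ("repair", "replace"):
            raise ValueError(f"unknown action: {item.action}")
        if item.action == "replace":
            total += PARTS_CATALOG[item.part]
        total += item.labor_hours * rate
    return round(total, 2)


items = [
    LineItem("rear_bumper_cover", "replace", 2.5),
    LineItem("quarter_panel_left", "repair", 3.0),
]
print(estimate(items, "region_b"))  # 420.00 + (2.5 + 3.0) * 75.00 = 832.5
```

Keeping the estimate itemized, rather than as a single figure, means the same output can back either a straight-through settlement or a repair-shop booking.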

Industry reports describe carriers running this pipeline at scale — over 10,000 FNOL claims a month at straight-through processing rates above 70 percent, without adding headcount. Average claim cycle times for routine OD claims have collapsed from days to under an hour in well-instrumented operations.

The Computer Vision Layer

The technical core of all of this is computer vision, and the models have matured fast. Modern damage detection systems trained on millions of vehicle images can:

- Identify damaged parts at the panel level — bumper, fender, headlight assembly, quarter panel.

- Classify damage type — dent, scratch, crack, deformation, missing component.

- Estimate severity on a calibrated scale.

- Recommend repair versus replace at the part level.

- Detect prior damage, which is critical for both fraud and pre-inspection workflows.
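One way to picture the model's per-part output is as a small record, with the repair-versus-replace call derived from damage type and severity. The sketch below is illustrative only: the 0-to-1 severity scale, the 0.6 replace cutoff, and the rule that cracks and missing components are always replaced are assumptions, and commercial systems may learn this decision from repair outcomes rather than hard-coding it.

```python
from dataclasses import dataclass
from enum import Enum


class DamageType(Enum):
    DENT = "dent"
    SCRATCH = "scratch"
    CRACK = "crack"
    DEFORMATION = "deformation"
    MISSING = "missing_component"


# Assumption for this sketch: these damage types are never repaired in place.
ALWAYS_REPLACE = {DamageType.CRACK, DamageType.MISSING}
# Hypothetical cutoff on a calibrated 0-1 severity scale.
REPLACE_SEVERITY = 0.6


@dataclass(frozen=True)
class PartFinding:
    part: str            # panel-level label, e.g. "front_bumper"
    damage: DamageType   # classified damage type
    severity: float      # calibrated severity in [0, 1]
    prior_damage: bool   # model judges the damage to pre-date the incident

    def recommendation(self) -> str:
        """Return 'exclude', 'replace', or 'repair' for this part."""
        if self.prior_damage:
            return "exclude"  # pre-existing damage is not priced into this claim
        if self.damage in ALWAYS_REPLACE or self.severity >= REPLACE_SEVERITY:
            return "replace"
        return "repair"


findings = [
    PartFinding("front_bumper", DamageType.SCRATCH, 0.2, False),
    PartFinding("headlight_left", DamageType.CRACK, 0.3, False),
    PartFinding("fender_right", DamageType.DENT, 0.75, False),
    PartFinding("hood", DamageType.DENT, 0.4, True),
]
for f in findings:
    print(f.part, f.recommendation())
```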

Some commercial systems claim recognition across thousands of distinct damage combinations. The exact number matters less than the fact that consistency at scale is now achievable in a way no human inspection network can match. Two adjusters looking at the same photo set will often produce different estimates; a deployed model given the same photos returns the same estimate every time.

Total Loss Triage

A particularly high-value OD use case is early total-loss identification. The earlier a carrier knows a vehicle is a probable total, the sooner it can cap rental costs, route the case to salvage, and offer a settlement. AI models compare estimated repair cost against vehicle valuation in real time, cross-reference the result with year, make, model, mileage, and trim, and flag likely totals from the FNOL photos themselves.
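At its core, total-loss triage is a ratio of estimated repair cost to vehicle value. A minimal sketch, assuming the valuation has already been looked up from year, make, model, mileage, and trim: the 0.75 threshold is only a placeholder (total-loss rules vary by jurisdiction and by carrier), and the review band is a hypothetical way to send borderline cases to an adjuster, because a photo-based estimate carries its own uncertainty.

```python
def total_loss_triage(
    repair_estimate: float,
    vehicle_value: float,
    threshold: float = 0.75,
    review_band: float = 0.15,
) -> str:
    """Classify a claim as 'probable_total', 'review', or 'repairable'.

    threshold:   placeholder repair-cost-to-value ratio at which the vehicle is
                 treated as a total loss.
    review_band: ratios this far below the threshold go to a human, because the
                 photo-based repair estimate is itself uncertain.
    """
    if vehicle_value <= 0:
        raise ValueError("vehicle_value must be positive")
    ratio = repair_estimate / vehicle_value
    if ratio >= threshold:
        return "probable_total"
    if ratio >= threshold - review_band:
        return "review"
    return "repairable"


print(total_loss_triage(9_000, 10_000))  # ratio 0.90 -> probable_total
print(total_loss_triage(6_500, 10_000))  # ratio 0.65 -> review
print(total_loss_triage(2_000, 10_000))  # ratio 0.20 -> repairable
```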

Total-loss frequency has been climbing for several years, driven by older vehicle fleets, more expensive sensors and advanced driver-assistance system (ADAS) components, and tighter repairability tolerances. That trend makes early, accurate total-loss triage one of the higher-leverage AI investments a motor carrier can make.

Image-Based Fraud Detection

Own damage fraud is dominated by a few specific patterns, and image-based AI is unusually effective against them:

- Photo manipulation. Forensic models check for tell-tale signs of edited images — inconsistent lighting, shadow direction, compression artifacts, and metadata anomalies.

- Image reuse. Hashing and similarity search detect photos that have appeared in earlier claims, sometimes across different carriers if data sharing arrangements exist.

- Prior damage included as new. Models compare submitted photos against pre-inspection imagery captured at policy bind to identify damage that pre-existed coverage.

- Inconsistent damage patterns. A frontal collision claim with damage only to the rear quarter is a flag that a model surfaces instantly.
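The image-reuse check can be illustrated with perceptual hashing. The sketch below uses average hashing (aHash), a simple technique chosen here for illustration; the article does not say which hashing scheme carriers use. It assumes photos have already been decoded into grayscale pixel grids, and the claim IDs and the 5-bit match cutoff are hypothetical. aHash tolerates uniform brightness shifts but not heavy cropping or rotation, where more robust hashes or learned embeddings would be needed.

```python
def downsample(gray: list[list[float]], size: int = 8) -> list[list[float]]:
    """Block-average a grayscale image (a list of pixel rows) down to size x size."""
    h, w = len(gray), len(gray[0])
    if h < size or w < size:
        raise ValueError("image is smaller than the hash grid")
    out = []
    for i in range(size):
        r0, r1 = i * h // size, (i + 1) * h // size
        row = []
        for j in range(size):
            c0, c1 = j * w // size, (j + 1) * w // size
            block = [gray[r][c] for r in range(r0, r1) for c in range(c0, c1)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def average_hash(gray: list[list[float]], size: int = 8) -> int:
    """One bit per grid cell: 1 if the cell is brighter than the image mean."""
    cells = [v for row in downsample(gray, size) for v in row]
    mean = sum(cells) / len(cells)
    bits = 0
    for v in cells:
        bits = (bits << 1) | (1 if v > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def find_reuse(new_hash: int, index: dict[str, int], max_distance: int = 5) -> list[str]:
    """Return IDs of earlier claims whose stored hash is within max_distance bits."""
    return sorted(cid for cid, h in index.items() if hamming(new_hash, h) <= max_distance)


# Synthetic 32x32 "photo", a brighter copy of it, and an upside-down copy.
photo = [[r * 8 + c for c in range(32)] for r in range(32)]
brighter = [[v + 10 for v in row] for row in photo]
flipped = photo[::-1]

index = {"CLM-001": average_hash(brighter), "CLM-002": average_hash(flipped)}
print(find_reuse(average_hash(photo), index))  # ['CLM-001']
```

A linear scan over the index is enough for a sketch; at carrier volumes the same Hamming-distance query would sit behind a similarity-search index.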

In published benchmarks, automated detection of fraudulent or manipulated images has reached and exceeded 90 percent on test sets, far above what manual review of a high-volume queue can sustain. Even allowing for the difference between benchmarks and field performance, the operational impact on loss ratio is real.

Telematics Closes the Loop

Photos tell you what the car looks like after an event. Telematics and OBD-II data help establish what actually happened. Connected-car platforms and event data recorders provide objective signals (speed, deceleration, impact direction, time of day, location) that can corroborate or contradict the reported scenario. Combined with image-based assessment, this telemetry layer makes it materially harder to inflate, fabricate, or stage OD claims.
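A telematics cross-check can be sketched by turning the peak crash acceleration into an impact direction and comparing it with both the reported scenario and the zones where the vision model found damage. This assumes the peak acceleration has already been extracted from the telematics or event-recorder feed in a vehicle frame (x forward, y to the left, in g). The part-to-zone map and the 1.5 g impact threshold are hypothetical.

```python
import math

# Hypothetical mapping from panel-level part labels to vehicle zones.
PART_ZONE = {
    "front_bumper": "front", "hood": "front", "headlight_left": "front",
    "rear_bumper": "rear", "tailgate": "rear", "quarter_panel_rear_left": "rear",
    "door_front_left": "left", "door_front_right": "right",
}


def impact_zone(ax: float, ay: float) -> str:
    """Infer the struck zone from peak acceleration (x forward, y left).

    The vehicle is pushed away from the point of contact, so the struck side
    lies along the negated acceleration vector.
    """
    angle = math.degrees(math.atan2(-ay, -ax))
    if -45 <= angle <= 45:
        return "front"
    if 45 < angle < 135:
        return "left"
    if -135 < angle < -45:
        return "right"
    return "rear"


def corroborate(reported_zone: str, damaged_parts: list[str],
                ax: float, ay: float, min_g: float = 1.5) -> list[str]:
    """Return inconsistency flags; an empty list means the signals agree."""
    flags = []
    if math.hypot(ax, ay) < min_g:
        flags.append("no impact-level acceleration recorded")
    else:
        sensed = impact_zone(ax, ay)
        if sensed != reported_zone:
            flags.append(f"telematics impact zone '{sensed}' differs from reported '{reported_zone}'")
    damaged_zones = {PART_ZONE.get(p, "unknown") for p in damaged_parts}
    if reported_zone not in damaged_zones:
        flags.append(f"no damage detected in reported zone '{reported_zone}'")
    return flags


# Reported frontal collision, but the car was pushed forward and only the rear quarter is damaged.
print(corroborate("front", ["quarter_panel_rear_left"], ax=3.0, ay=0.0))
# Consistent frontal collision: hard deceleration, damage at the front.
print(corroborate("front", ["front_bumper", "hood"], ax=-4.0, ay=0.0))  # []
```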

What Adjusters Now Do in OD

The honest description of the change: routine OD work is being automated. A clean rear-end collision with a single damaged bumper, photographed properly, validated against telematics, and priced against the parts catalog does not need a human adjuster touching the file.

What does need a human:

- Multi-vehicle incidents with disputed liability allocation.

- Severity edge cases, especially where ADAS calibration costs swing repair-vs-total decisions.

- Customer disputes over coverage, deductibles, or repair shop choice.

- Suspected fraud cases that need investigation beyond image forensics.

- Anything involving injury, which routes to a third-party bodily injury (TPBI) workflow with very different requirements.
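The split between automated handling and adjuster work can be expressed as a small rule set that checks the human-review triggers listed above before choosing among the three outcomes of the photo pipeline. Every threshold and field name here is hypothetical, and the rule that small estimates settle directly while larger ones go to a network shop is a simplifying assumption; in practice the customer's choice and the carrier's repair program would shape that branch.

```python
from dataclasses import dataclass

# Hypothetical routing parameters.
STP_LIMIT = 2_500.0       # largest estimate settled without a human or a shop booking
FRAUD_CUTOFF = 0.7        # fraud score at or above which the file is referred
NEAR_TOTAL_RATIO = 0.6    # repair-to-value ratio where ADAS costs can tip the decision


@dataclass
class Claim:
    estimate: float = 0.0
    vehicles_involved: int = 1
    liability_disputed: bool = False
    adas_calibration: bool = False
    repair_to_value: float = 0.0
    customer_dispute: bool = False
    fraud_score: float = 0.0      # combined signal from image forensics and telematics
    injury_reported: bool = False


def route(c: Claim) -> tuple[str, list[str]]:
    """Return (destination, reasons) for an OD claim."""
    if c.injury_reported:
        return "tpbi_workflow", ["injury reported"]
    reasons = []
    if c.vehicles_involved > 1 and c.liability_disputed:
        reasons.append("disputed multi-vehicle liability")
    if c.adas_calibration and c.repair_to_value >= NEAR_TOTAL_RATIO:
        reasons.append("ADAS calibration near repair-vs-total boundary")
    if c.customer_dispute:
        reasons.append("customer dispute")
    if c.fraud_score >= FRAUD_CUTOFF:
        reasons.append("fraud score above cutoff")
    if reasons:
        return "adjuster", reasons
    if c.estimate <= STP_LIMIT:
        return "straight_through_settlement", []
    return "network_repair_booking", []


print(route(Claim(estimate=900.0)))  # ('straight_through_settlement', [])
print(route(Claim(estimate=1_800.0, vehicles_involved=2, liability_disputed=True)))
```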

In well-run carriers, OD adjusters are handling fewer files but more interesting ones, and operations leaders are reallocating headcount toward complex losses, the special investigations unit (SIU), and customer experience roles where humans add disproportionate value.

The Bottom Line

Own damage is the part of motor claims where AI has clearly cleared the experimental phase. The technology stack — guided photo capture, computer vision, pricing engines, image forensics, telematics integration — is operational at scale, and the carriers using it well are pulling away on cycle time, loss ratio, and customer satisfaction. The strategic question for the rest of the market is no longer whether to deploy, but how quickly the gap can be closed.